Panajev2001a

GAF's Pleasant Genius

ROV allow you to modify the pipeline’s behaviour to handle OIT in software (shader code: https://docs.microsoft.com/en-us/windows/win32/direct3d11/rasterizer-order-views) which is different from pixel sorting DC did for opaque+punch-through geometry and transparent one ( https://docs.microsoft.com/en-us/previous-versions/ms834190(v=msdn.10) ). This was something they stopped doing on PC designs and future designs, but according to people that worked on its design it was not a big cost for the HW side at all.DirectX12_1 has ROV (Rasterizer Order Views). XBox 360's has ROV-like features since the emulator (Xenia) mapped Xbox 360's certain ROPS feature to DirectX12_1's ROV.

… and it was quite a lot of years ago too.

Not really, you just choose to pick fights for the fun of it / to stand taller, maybe got the feeling of some console warring or someone saying something you disagree with and you start hounding people’s posts with slides and links barely addressing what they are saying until they stop posting.Reciprocal treatment is an easy concept to understand, hence I returned the same serve back to you.

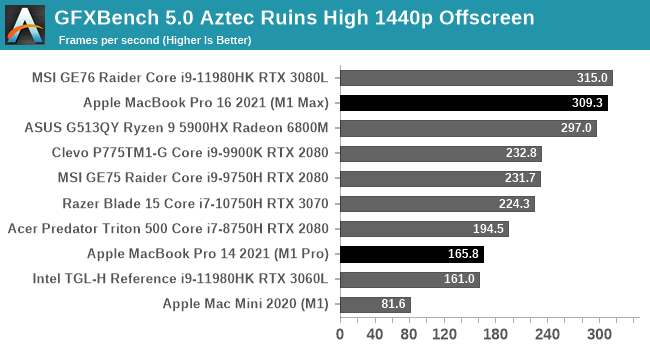

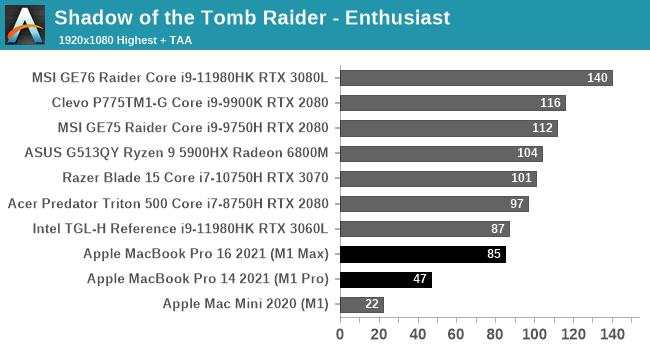

You think TBDR’s are obsolete and Apple’s choice to stick with them is odd if not wrong, I do think they still for now have advantages (especially to reach similar levels of performance as consoles at a much lower power consumption, die size they are not doing too bad trade-offs wise as well considering it is a lot of GPU in there but tons of other HW and embedded caches making up the space). You can do a depth pre-pass and use early-z and Hierarchical Z buffers to reject geometry later to reduce overdraw but that will still burn extra power compared to processing geometry and rendering sorted triangles with invisible ones culled by the HW and the scene already binned in independent tiles (on chip tile bandwidth is easy to keep very high and Apple has been building extensions to do more steps without rendering temporary buffers out to main memory too, and the ability to do the MSAA resolve step before writing out the tile is another bandwidth win… you are free to look at a PC CPU+GPU SoC combo with that memory bandwidth and up to 64 GB of RAM on the package and feel smug, others appreciate the engineering challenge behind something like the M1 Max).

Last edited: