Fast RT hardware is nothing new? The maths behind RT is decades old, but hardware accelerating it to the point where it's real time? Please show me how that's nothing new, as in, existing hardware.

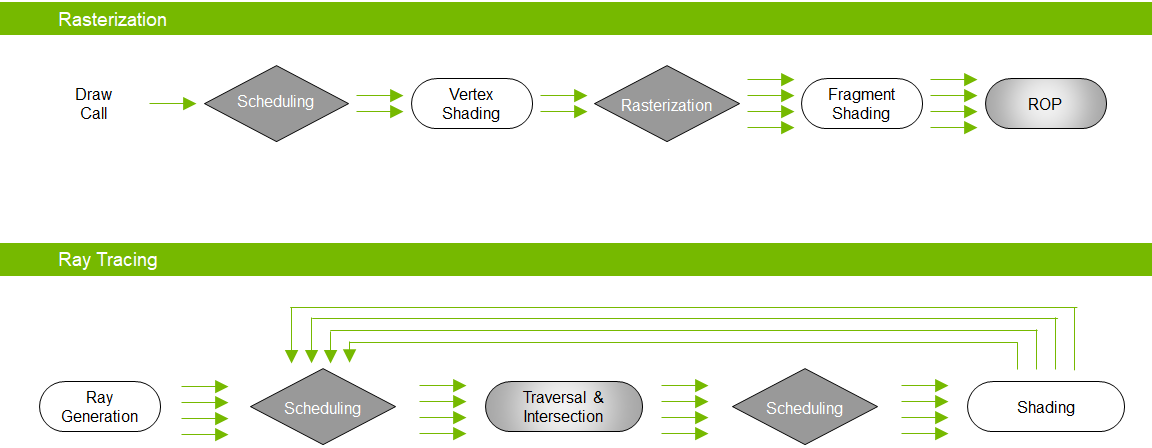

A dedicated external chip? That was called a CPU, since it's basically maths, and it was never fast enough to be real-time except maybe for the simplest scenes, so it never found its way into gaming. To ACCELERATE this they put the computation on the GPU, which is also the king of parallelization, and cheated on the typical "Hollywood quality RT" algorithms by relying on rasterization. That's the hybrid approach, and it uses the GPU's FP32 shader units to reach those real-time speeds.

Each object's surfaces are shaded BASED on material properties and the light bounces. So you want to rip the polygon object from the GPU, send it over a slower pipeline (slower than whatever is inside the monolithic block), create the BVH object, calculate the intersections, and send it back to the GPU to shade correctly. Outsourcing the BVH when it sits so close to the shader pipeline would probably force the algorithm to update all shader parameters every frame for every object, which is super inefficient, while Nvidia right now only updates the shaders that changed, as everything is stored locally in buffers and loops within the same pipeline.
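To give an idea of what "calculate the intersections" means in practice, here is a minimal sketch of the ray vs. bounding box check that BVH traversal repeats at every node (the classic slab test). It's plain Python for illustration only; the function name `ray_hits_aabb` and its parameters are made up for this example and are not taken from any vendor's implementation.

```python
import math

def ray_hits_aabb(origin, direction, box_min, box_max):
    """Slab test: does the ray origin + t * direction (t >= 0) hit the box?

    Hypothetical helper for illustration; real hardware does this per BVH node.
    """
    t_near, t_far = 0.0, math.inf
    for o, d, lo, hi in zip(origin, direction, box_min, box_max):
        if d == 0.0:
            # Ray is parallel to this pair of planes: it must start between them.
            if o < lo or o > hi:
                return False
            continue
        # Distances along the ray to the two planes of this axis.
        t0, t1 = (lo - o) / d, (hi - o) / d
        if t0 > t1:
            t0, t1 = t1, t0
        # Shrink the interval where the ray is inside all slabs seen so far.
        t_near = max(t_near, t0)
        t_far = min(t_far, t1)
        if t_near > t_far:
            return False
    return True

# A ray fired along +z from in front of a unit cube hits it.
print(ray_hits_aabb((0, 0, -5), (0, 0, 1), (-1, -1, -1), (1, 1, 1)))
```

Now imagine running that for every ray, against many boxes per ray, every frame, and then handing each hit to the shaders. That's why you want it sitting right next to the shader pipeline.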

Let's add a denoising budget of 1 ms @ 1080p that has to be ghosting-free and temporally stable in games (you know... unpredictable movement). And that denoising is done where? In the shader pipeline, with a temporal solution.
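To put that 1 ms number in perspective, here is a rough back-of-the-envelope sketch. The first function just divides the budget by the pixel count; the second is the simplest possible temporal accumulation (blend the new noisy frame into a history buffer). Both names are hypothetical, and the fixed `alpha` blend is an assumption for illustration: real denoisers also reproject the history with motion vectors and throw it away when it no longer matches, which is where the ghosting problem comes from.

```python
def per_pixel_budget_ns(budget_ms, width, height):
    """Time available per pixel, in nanoseconds, for a given frame budget."""
    return budget_ms * 1e6 / (width * height)

def temporal_blend(history, current, alpha=0.1):
    """Exponential moving average of per-pixel values (illustrative only).

    alpha is an assumed blend weight: low values are stable but ghost,
    high values react fast but stay noisy.
    """
    return [(1.0 - alpha) * h + alpha * c for h, c in zip(history, current)]

# 1 ms spread over a 1920x1080 frame: well under a nanosecond per pixel.
print(round(per_pixel_budget_ns(1.0, 1920, 1080), 3))

# Blend one noisy frame into the history buffer.
print(temporal_blend([0.0, 1.0], [1.0, 1.0], alpha=0.5))
```

That budget only works because the GPU chews through pixels massively in parallel, in the same place the rest of the frame already lives.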

So, to sum up:

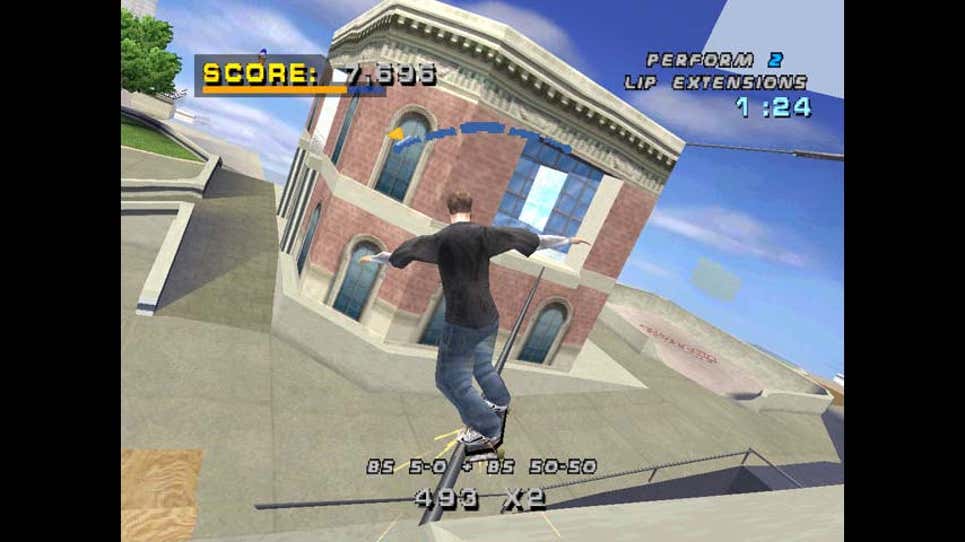

You think that back in the day (2002), when games looked like this:

the industry just wanted to hold back on RT? At a time when freaking cube maps were barely used, baked lighting was often neglected because even that took a lot of time to render, and we were at the dawn of programmable shaders? Let's not even get into the appearance of temporal vectors, which are years and years beyond the tech we had in those days.

Microsoft, who created the DXR consortium, got trolled by Nvidia? AMD, who was part of the consortium, somehow collaborated in a scheme to hold back RT technology? Across the Sony/Nintendo/Microsoft consoles of the last 20 years, not a single engineer said "Very easy real-time RT, let's implement it"?

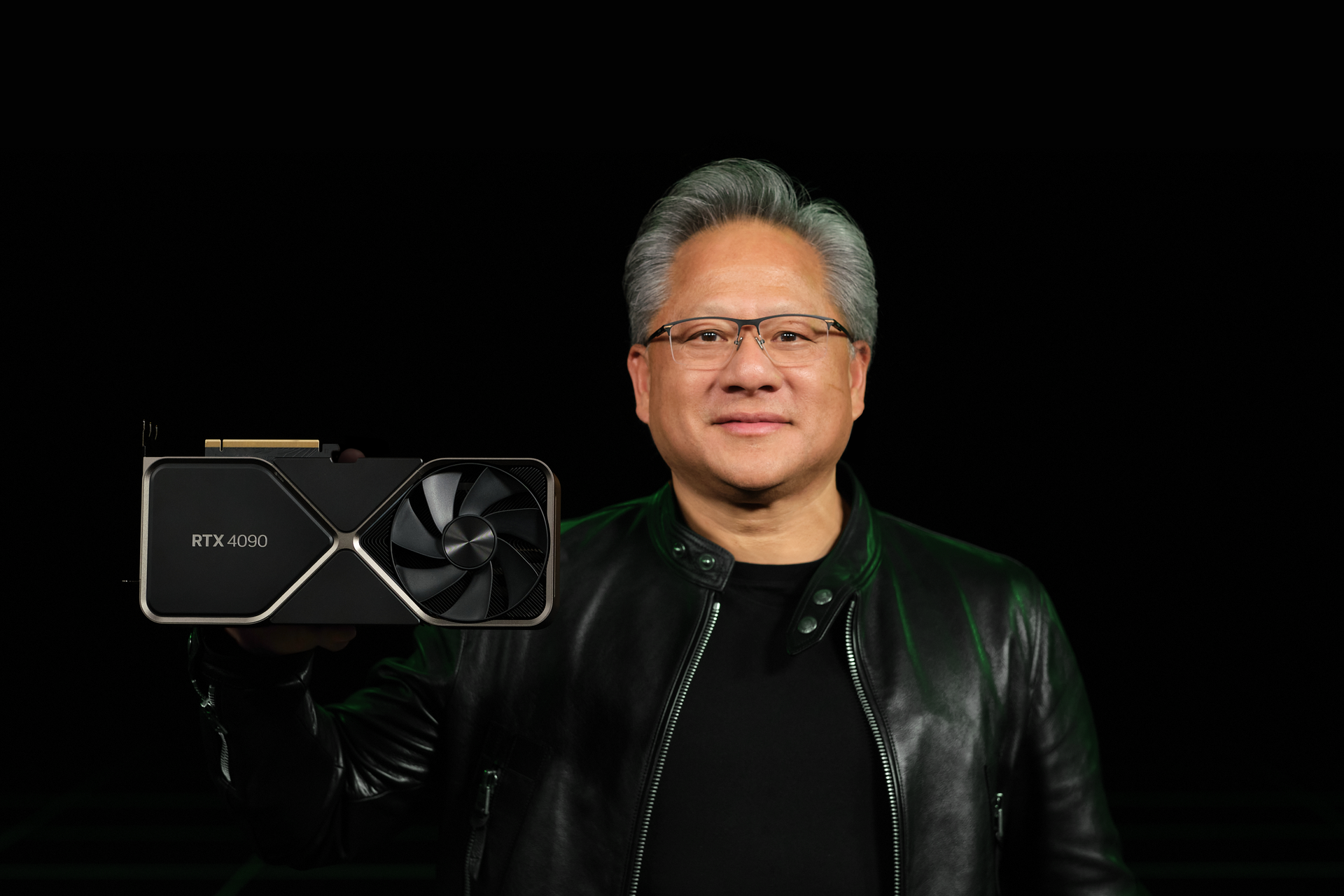

Here comes lukilladog, though, with these vibes:

Saying that multiple multi-billion-dollar companies just held back tech for... "selling GPUs at premium prices". Companies that work with universities around the world on computer graphics advancements and publish peer-reviewed papers that are scrutinized by thousands of experts in the field. Everyone, such as lukilladog here on NEOGAF, knew that they were holding back for decades to milk GPU prices, and nobody said a word, nor did anyone implement it to get an edge on the competition? Like, oh... say, ATI, who almost went bankrupt, or AMD, who followed suit and barely survives with 1.5-1.8% market share.

The same AMD that was part of the consortium for DXR 5 years ago, but somehow managed to deliver a worse RT performance per CU ratio than Turing, although apparently it's all very easy to make.

Man, what a gold mine you are. Please go publish your ideas in papers. I want you to bring down the GPU monopolies and make them take their dirty hands off the neck of progress. Can't wait to read them.