If you did RT only you could bench Dirt 5 and WoW Shadowlands and come out with RDNA2 > Ampere. Unless NV fixed the issue in Shadowlands, that is.

Sure, you/we could, and it has been done repeatedly, but everyone ignores or wants to forget the logic behind the discrepancies, and review sites did a shit job overall of presenting what is going on.

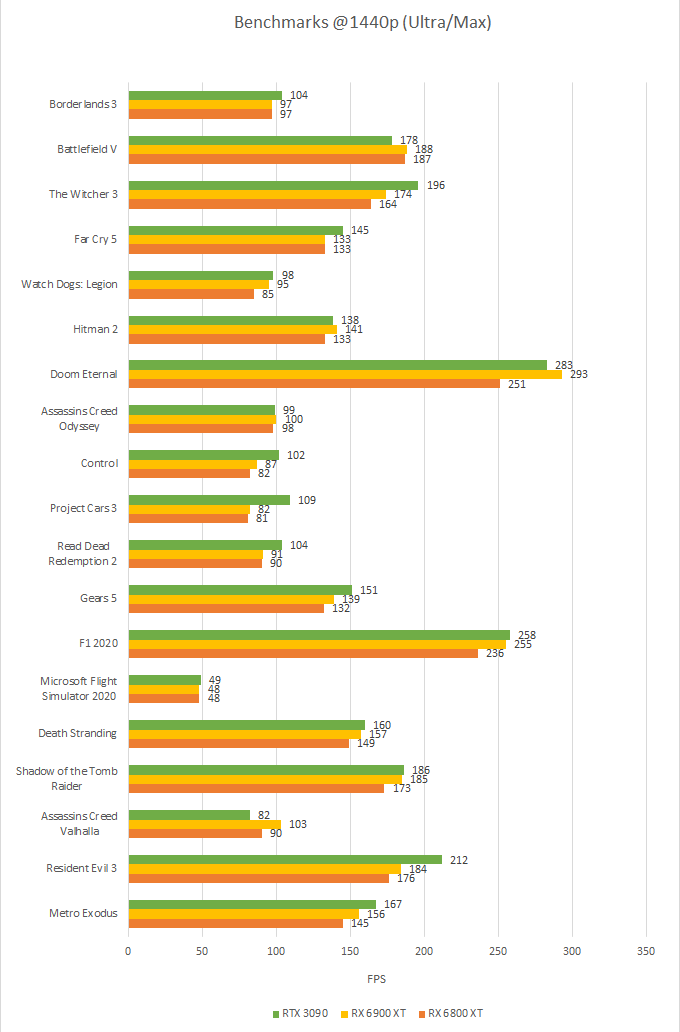

Let's go back in time, to when the reviews were dropping:

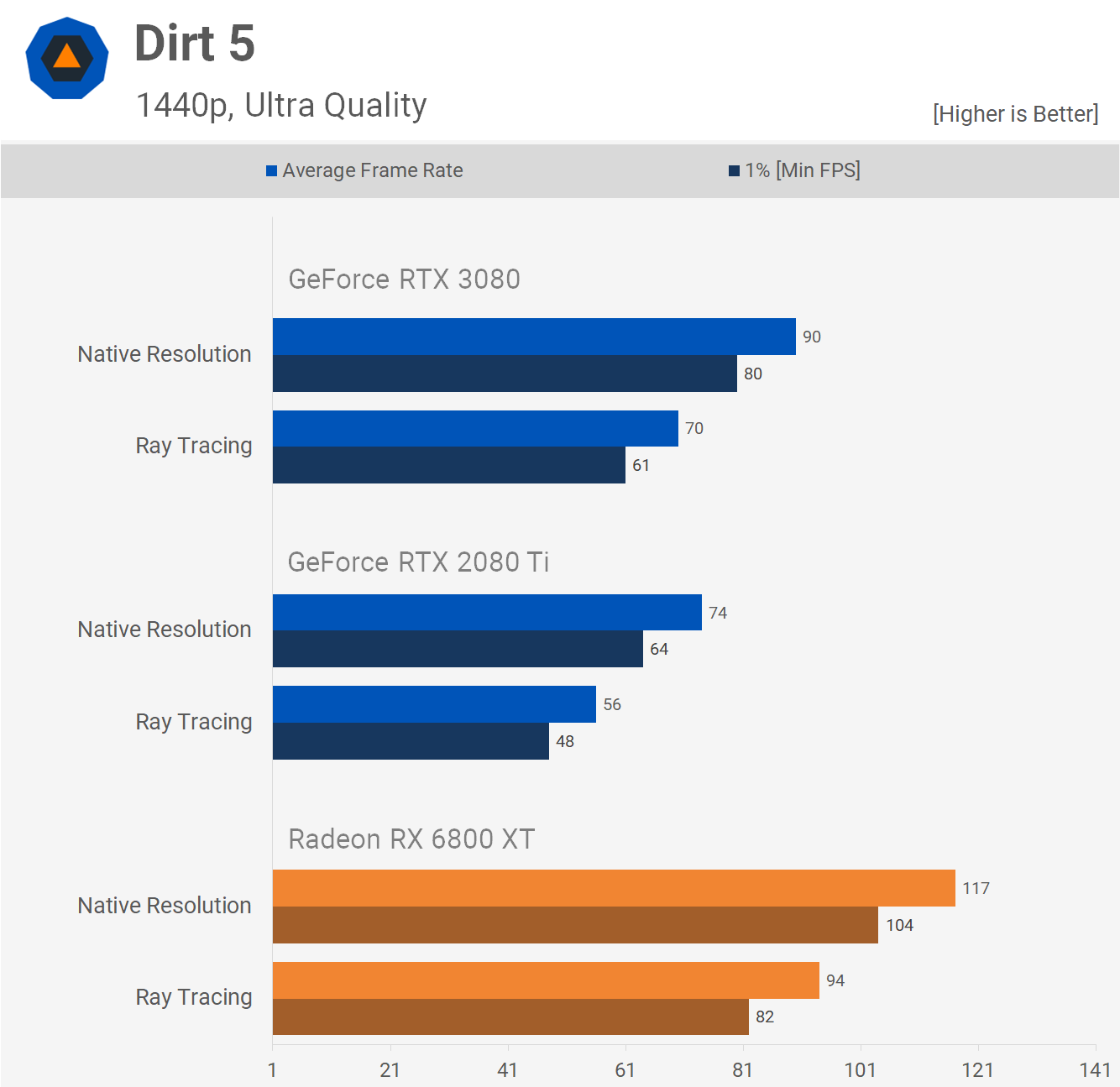

Dirt 5

Without RT: 6800XT 30% ahead of the 3080 (and of course, taken at 1440p rather than 4K, it's TechSpot..)

Dirt 5

With RT: 6800XT 34% ahead of the 3080

Which kind of makes sense for light RT games that only use RT shadows, as RDNA 2 will simply have a couple of CUs disabled for light RT tasks while Nvidia's ASIC philosophy behind RT is not really being worked to its full potential. That's about +5% raw RT performance. I think anyone in either camp can understand that optimization can and will make these kinds of differences. But the foundation of this game was rotten at launch.

The main cause of this drastic difference has nothing to do with RT; it's this game's variable rate shading (VRS), which is a custom algorithm for RDNA 2 and was borked on Nvidia (until there's a patch..). But more importantly, not a single tech site/youtuber thought it was worth putting this game aside for a minute until we figure out why a 30% difference is happening, contacting the developers, and keeping it out of the averages. Of course not. The wave of reviews is now frozen in time, and these benchmarks have kept perpetuating lousy arguments about RDNA 2 performance on the internet since last fall.
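Here's a rough sketch of the kind of sanity check a reviewer could run before a game goes into an average. Only the Dirt 5 ratio (1.30) comes from the launch numbers above; every other entry in `suite` is a made-up placeholder, and the MAD-based cutoff of 3.5 is just a common rule of thumb, not something any review site is known to use.

```python
from math import log
from statistics import geometric_mean, median

def flag_outliers(ratios, threshold=3.5):
    """Flag games whose 6800XT/3080 ratio sits far from the suite's median.

    Uses a modified z-score on log-ratios, based on the median absolute
    deviation (MAD), so one broken game cannot hide itself by dragging
    the mean along with it.
    """
    logs = {game: log(r) for game, r in ratios.items()}
    med = median(logs.values())
    mad = median(abs(v - med) for v in logs.values())
    if mad == 0:
        return []  # every game agrees, nothing to flag
    return [g for g, v in logs.items() if 0.6745 * abs(v - med) / mad > threshold]

# 6800XT / 3080 performance ratios. Dirt 5 is the launch figure quoted above;
# "Game A".."Game E" are HYPOTHETICAL placeholders, not real benchmark results.
suite = {
    "Dirt 5": 1.30,
    "Game A": 1.02,
    "Game B": 0.97,
    "Game C": 1.05,
    "Game D": 0.95,
    "Game E": 1.00,
}

suspects = flag_outliers(suite)
clean = {g: r for g, r in suite.items() if g not in suspects}

print("needs a second look:", suspects)
print(f"geomean with everything: {geometric_mean(suite.values()):.3f}")
print(f"geomean without suspects: {geometric_mean(clean.values()):.3f}")
```

The point isn't to silently drop the game, it's to get a prompt to go ask the developers what's going on before the number ends up in a chart.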

Let's now look at what happens when a patch hits.

Well well well..

1440p : +9% without RT, +6% with RT for 6800XT vs 3080

4k : -6% without RT, +1% with RT for 6800XT vs 3080
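If you want to redo the math yourself, here's a small sketch that takes the leads quoted in this post and works out how much turning RT on actually moves the gap. It measures the shift as a ratio of the two leads rather than a difference in percentage points, so the launch figure lands a bit under the rough +5% mentioned earlier. The function name `rt_shift` is just something I made up for this.

```python
# 6800XT lead over the 3080, in percent, exactly as quoted in this post.
gaps = {
    "launch review": {"raster": 30.0, "rt": 34.0},
    "patched, 1440p": {"raster": 9.0, "rt": 6.0},
    "patched, 4K": {"raster": -6.0, "rt": 1.0},
}

def rt_shift(raster_pct, rt_pct):
    """Relative change in the 6800XT's lead when RT is switched on, in percent."""
    return ((1 + rt_pct / 100) / (1 + raster_pct / 100) - 1) * 100

for label, g in gaps.items():
    print(f"{label}: RT moves the gap by {rt_shift(g['raster'], g['rt']):+.1f}%")
```

Either way, the effect of RT itself is a few percent in every case. The swing from a 30% lead to single digits comes from the patch, not from RT.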

WoW Shadowlands (again, also with FidelityFX VRS):

You seeing this shit?

A 2080 Ti at the same performance as the 3090 did not raise any red flags? The scaling does not make any fucking sense here. Ain't wasting more time analyzing such a title when the basics of it are so broken to begin with.

Also in some scenes in RE Village @ 4K with RT it eats up the 8GB buffer, so the 12GB 3060 bests the 3060 Ti and 3070. As does the 6700XT.

Guru3D

Big difference in memory usage..

But more importantly, the other sites do not show the drop that Guru3D showed for the 3060 Ti and 3070.

KitGuru too, but the image is just way too big..

Those VRAM numbers alone are so different between Guru3D and TechPowerUp.

Guru3D likely let the game run for a long time, because there's a memory leak in this game (found later). The bigger the memory, the longer it takes to hit a performance wall, which also explains Guru3D's huge memory difference with other sites. Taking a snapshot of that moment and putting it in a benchmark review is lazy reporting.
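To put the "bigger buffer, later wall" point in numbers, here's a toy model. The baseline usage and leak rate are invented for illustration (nobody published these for RE Village as far as I know), and a linear leak is an assumption; the only thing it shows is how the time before a card runs out of VRAM scales with buffer size.

```python
def minutes_until_vram_full(vram_gb, baseline_gb, leak_gb_per_min):
    """Minutes of play before a steadily leaking game fills the VRAM buffer."""
    if leak_gb_per_min <= 0:
        raise ValueError("leak rate must be positive")
    # Headroom above normal usage, divided by how fast the leak eats it.
    return max(0.0, (vram_gb - baseline_gb) / leak_gb_per_min)

# HYPOTHETICAL numbers, picked only to show the shape of the problem.
BASELINE_GB = 7.0       # assumed usage right after loading the scene
LEAK_GB_PER_MIN = 0.1   # assumed leak rate

for card, vram in [("8GB card", 8.0), ("12GB card", 12.0), ("16GB card", 16.0)]:
    t = minutes_until_vram_full(vram, BASELINE_GB, LEAK_GB_PER_MIN)
    print(f"{card}: roughly {t:.0f} min before the buffer is full")
```

With any numbers like these, a short benchmark pass never hits the wall on any card, while a long session hits it on the 8GB cards first. Same game, same cards, completely different chart depending on when you take the snapshot.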

So as you rightly point out, you can cherry-pick anything, even 30+ game suites. Personally, if the games are popular then the performance is the performance. I also think a few left-field picks using various engines/APIs is a good idea to see how well less popular games with less driver support fare.

I think we can all live with and accept a few % difference based on game sponsors; it's expected and ultimately, barely anyone would care, right? Ideally, there's more choice in the same performance range so that prices drop and we have more options on the market. What I find aggravating is the lack of critical thinking we find on almost all modern tech sites when they end up with a result that completely diverges from the norm and they don't fucking come back to it for a correction later! It's pretty shit all around, on both sides to be honest, and this "fanboy ammo" keeps resurfacing for way too long, sometimes well beyond a patch or a driver fix.

So these tech sites, rather than questioning the results and asking the devs questions, are now basically doing a job that an automated script could do for them, just churning out numbers with little or no discussion. I actually think an AI would write better articles than most tech sites.