I was reading something when I came across an old 3DFX interview and the announcement of the first-ever "GPU", the GeForce 256...

First the NVIDIA press conference...

http://www.firingsquad.com/features/geforce256trip/

Before Dawn, Nalu, Dusk, Luna, etc., there was Wanda!

The first NVIDIA girl!

Can't forget halo....

And now, the 3DFX Damage Control!

http://www.firingsquad.com/features/3dfxinterview/

The mudslinging going back and forth...

Nick Triantos of Nvidia stated that "Full scene AA in any system will cost performance. If you don't believe that, you're being misled by some marketing folks." Can you comment on that in regards to T-Buffer technology?

There is no doubt that full-scene AA is going to cost you fill-rate performance. We have never stated anything to the contrary. What we have said, however, is that with full-scene AA enabled there is still sufficient fill rate in our next-generation products to sustain 60 fps at 1024x768 resolution with 32bpp pixel depth for many games. It makes sense that Nick would say something like that when he's got to promote a competitive part that doesn't support any anti-aliasing, supports no new T-Buffer cinematic effects, and has such limited fill-rate capabilities.
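Both sides agree AA costs fill rate, and a quick back-of-the-envelope calculation shows why. This is a minimal sketch, not anything from 3dfx or NVIDIA: it assumes supersampling-style AA where every extra sample is written like an extra pixel, and the 4x sample count and `overdraw` factor are illustrative assumptions, as is the helper name `required_fill_rate`.

```python
def required_fill_rate(width, height, fps, samples_per_pixel=1, overdraw=1.0):
    """Samples per second that must be written to hit a target frame rate.

    samples_per_pixel: supersampling factor (assumed, e.g. 4 for 4x FSAA).
    overdraw: average times each pixel is drawn per frame (assumed; 1.0 = none).
    """
    return width * height * fps * samples_per_pixel * overdraw

# 1024x768 at 60 fps, the target quoted in the interview.
no_aa = required_fill_rate(1024, 768, 60)
aa_4x = required_fill_rate(1024, 768, 60, samples_per_pixel=4)

print(f"No AA:   {no_aa / 1e6:.1f} Mpixels/s")
print(f"4x FSAA: {aa_4x / 1e6:.1f} Msamples/s")
```

The cost scales linearly with the sample count, so 4x supersampling needs four times the raw fill rate before overdraw is even counted. That is the whole argument in a nutshell: the question was never whether AA costs performance, only whether the card had enough fill rate left over.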

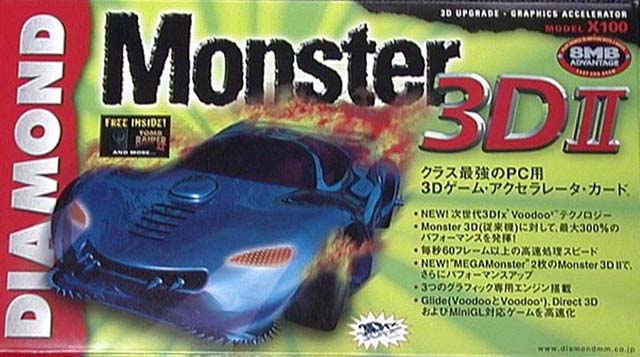

About the only thing they had going.

We believe full-scene AA is going to absolutely reset the image quality standard for next generation 3D accelerators. Once people see their favorite games rendered at real-time frame rates completely anti-aliased, they are never going to be able to look at aliased rendering again (regardless of how many triangles there are in a scene!). Trust us, we've been staring at anti-aliasing algorithms for quite some time now and it's miserable looking at aliased renderings now that we've become so accustomed to the amazing visual quality improvements associated with anti-aliased rendering.

What is your opinion on the term "GPU"? (A GPU, by Nvidia's definition, is "a single-chip processor with integrated transform, lighting, triangle setup/clipping and rendering engines that is capable of producing a minimum of 10 million polygons per second".)

Seems like everyone in this business is trying to come up with a new and cool marketing name for new technology. I think Nvidia's definition of a "GPU" is somewhat arbitrary and clearly slanted to GeForce's features. Certainly I don't think you'll see the term "GPU" become the de facto naming convention for on-chip geometry acceleration, however.
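For a sense of scale, the 10 million polygons per second floor in NVIDIA's definition works out to a per-frame budget like this. A rough sketch only: it treats the threshold as a sustained rate and ignores everything else competing for the frame, and `polys_per_frame` is a hypothetical helper.

```python
# Threshold taken from NVIDIA's "GPU" definition as quoted above.
GPU_MIN_POLYS_PER_SEC = 10_000_000

def polys_per_frame(polys_per_sec, fps):
    """Whole polygons available per frame at a given frame rate (assumes a sustained rate)."""
    return polys_per_sec // fps

for fps in (30, 60):
    print(f"{fps} fps: {polys_per_frame(GPU_MIN_POLYS_PER_SEC, fps):,} polygons/frame")
```

At 60 fps that is roughly 166 thousand polygons per frame, which shows why Sellers' jab about "how many triangles there are in a scene" was aimed squarely at GeForce's pitch.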

Scott Sellers must want to bash his head into the wall every time he comes across a modern video card.... :lol

Yes, the weather is shitty and I'm bored...and hungry...