I remember that. As it stands right now, RTX Tensor Cores just sit idle doing nothing if RT is off and are unusable for anything else. I wonder if AMD will have more flexibility out of the gate, like a unified process where devs can pick and choose so they don't have wasted silicon when RT isn't needed as much, and can push shaders or machine learning through them instead, etc.

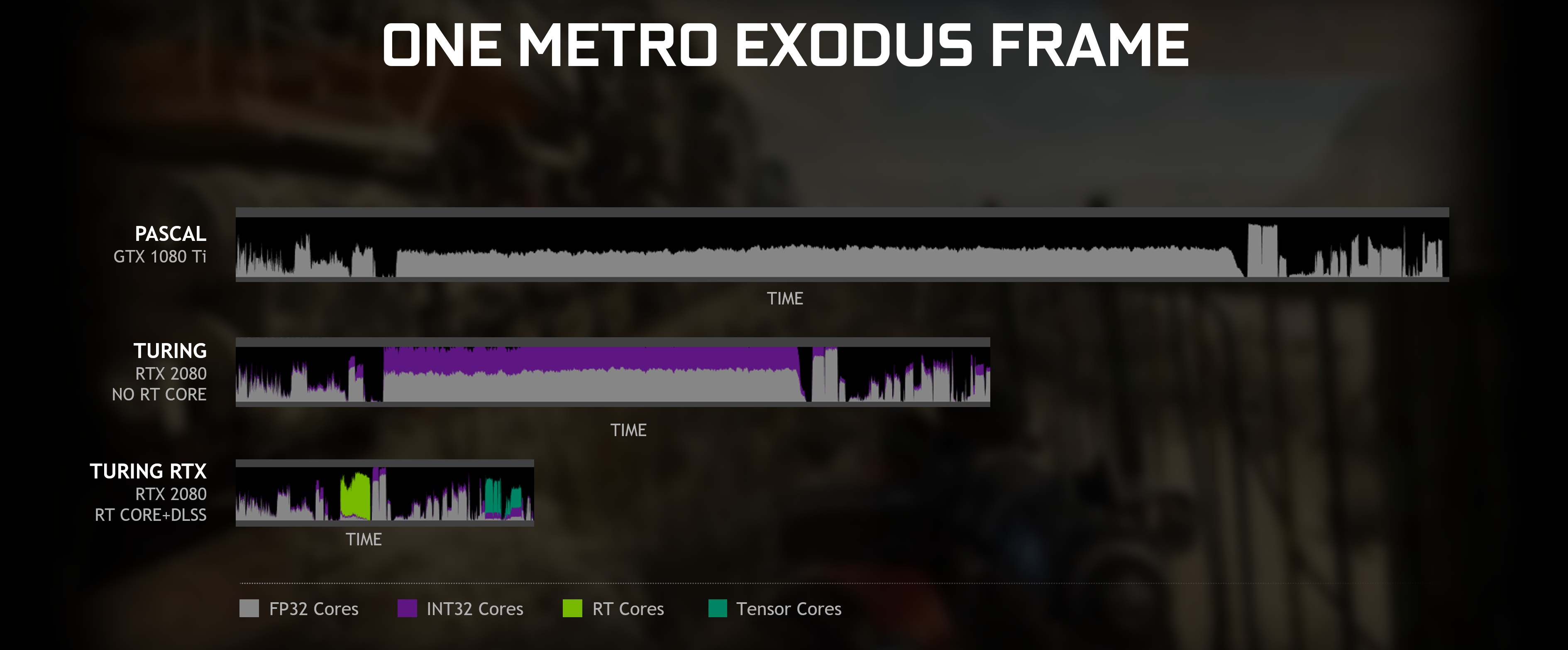

RT cores and Tensor cores are completely different and separate things. You can still use DLSS without RT (which nobody actually does, as the cards already do 4K60), or you can use DLSS along with RT to improve the overall performance. Or you can use RT alone as well, just with worse performance or at a lower resolution.

All that being said, I'm very curious how the RT implementation and performance in next-gen consoles will turn out. 4K30 is a given; let's be realistic and simply forget all the 8K and 120FPS PR BS, it ain't gonna happen. I can see MS opting for 4K60 in their 1st-party games like they already do, since they are fast-paced and/or heavily MP-focused, but I don't expect wonders from 3rd-party devs, with the exception of games that already do 60FPS like BF, CoD, FIFA etc. Sony's 1st-party cinematic/immersive games will possibly still sit at 4K30 in favor of even better graphics, animations, physics etc. But even if we assume that all games will be 4K60, that's still rasterization we are talking about, not RT. So the question is: will there be enough hardware RT performance for 4K? Or will it be restricted to Full HD? Because with the mid-gen refreshes and multiple performance modes within games, I can imagine next-gen titles having multiple graphics/performance profiles like this:

- 8K 30FPS
- 4K 60FPS
- FHD 120FPS
- FHD RT 60FPS
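
To put those profiles side by side, here's a quick back-of-the-envelope sketch of raw pixels per second for each one. The resolutions are my assumption (8K = 7680x4320, 4K = 3840x2160, FHD = 1920x1080), the names `PROFILES` and `pixels_per_second` are made up for illustration, and real GPU cost doesn't scale purely with pixel count (RT least of all), so treat it as a rough comparison only.

```python
# Rough pixel-throughput comparison for the hypothetical profiles above.
# Assumed resolutions: 8K = 7680x4320, 4K = 3840x2160, FHD = 1920x1080.
PROFILES = [
    ("8K 30FPS", 7680, 4320, 30),
    ("4K 60FPS", 3840, 2160, 60),
    ("FHD 120FPS", 1920, 1080, 120),
    ("FHD RT 60FPS", 1920, 1080, 60),
]


def pixels_per_second(width, height, fps):
    """Pixels that must be shaded per second at a given resolution and frame rate."""
    return width * height * fps


def throughput_table(profiles=PROFILES):
    """Map each profile name to its raw pixels-per-second figure."""
    return {name: pixels_per_second(w, h, fps) for name, w, h, fps in profiles}


if __name__ == "__main__":
    for name, pps in throughput_table().items():
        print(f"{name:>13}: {pps / 1e6:7.1f} Mpx/s")
```

By this crude measure each step down the list halves the pixel rate: 8K30 pushes twice the pixels per second of 4K60, which pushes twice that of FHD 120.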

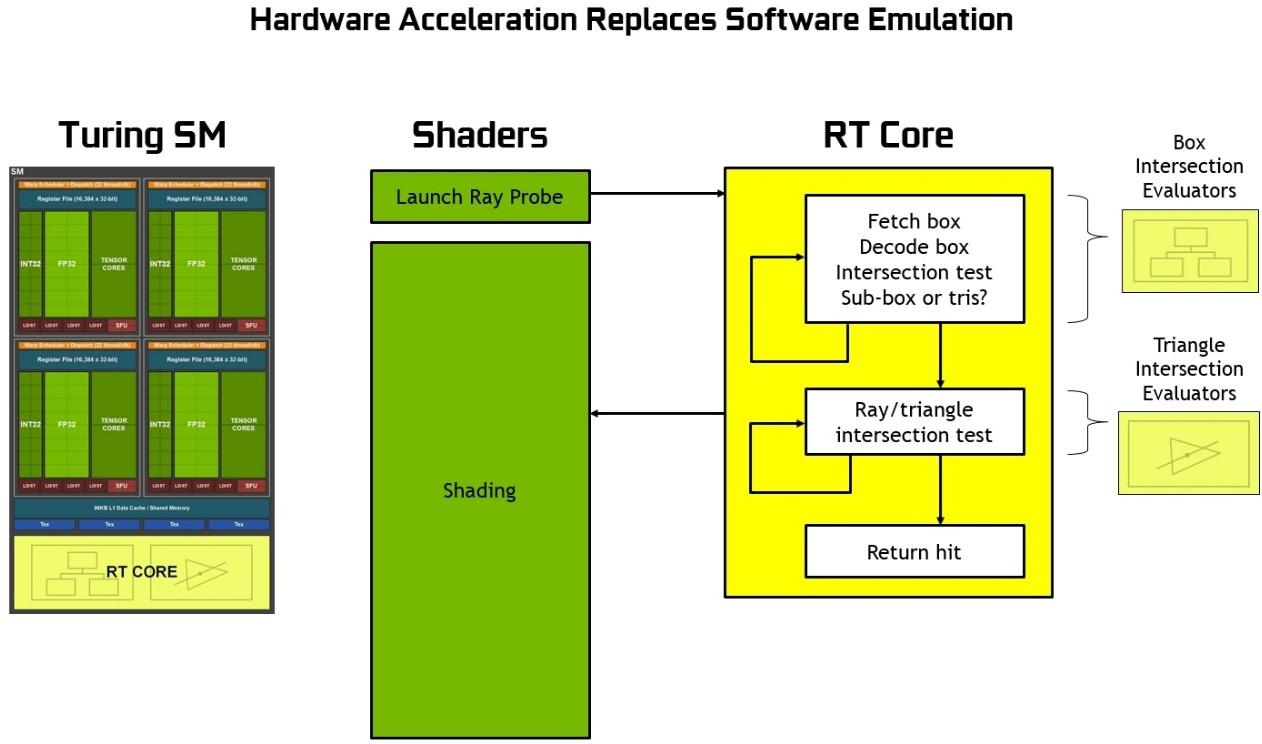

That's the only way I can see them pulling off 8K or 120FPS, BTW. But given how even the most powerful GPUs on the planet barely handle RT at FHD 60, it's really hard for me to imagine next-gen consoles doing more than that, especially given how far behind AMD is compared to NV, and that it will be their first RT implementation to begin with. But who knows, maybe they will do it better, never say never - like you said, there's a lot of wasted silicon on the RTX cards, and putting in more RT cores instead of Tensor cores could greatly increase RT performance. And since RT offloads so many of the heaviest calculations from the CUDA cores, those are also sitting somewhat idle, so some chunk of them could be replaced with RT cores as well, for even more RT performance. I just cannot fight the feeling that NV screwed up big time when balancing the Turing architecture, so yeah, there is a chance AMD will learn from their mistakes, especially given that consoles are a closed architecture, which makes everything so much simpler due to no market fragmentation.

The other option I can see for RT is games still relying heavily on sub-4K resolutions with upscaled/dynamic/reconstructed/checkerboarded solutions, so having just enough RT performance for 1440p-1600p, and then bumping the image up to "4K".
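
For a sense of how much that would save, here's a minimal sketch of the fraction of a 4K frame that actually gets rendered at a given internal resolution. It assumes a 16:9 frame and a 3840x2160 target, `pixel_fraction` is just an illustrative helper name, and it ignores whatever the reconstruction pass itself costs.

```python
# Fraction of a "4K" (3840x2160, assumed) frame rendered natively at a lower
# internal resolution. With the same aspect ratio, pixel count scales with the
# square of the vertical resolution.
TARGET_HEIGHT = 2160


def pixel_fraction(internal_height, target_height=TARGET_HEIGHT):
    """Share of the target frame's pixels rendered at the internal resolution."""
    return (internal_height / target_height) ** 2


if __name__ == "__main__":
    for height in (1440, 1600, 2160):
        print(f"{height}p renders {pixel_fraction(height):.0%} of the 4K pixel count")
```

So 1440p is a bit under half the pixels of native 4K and 1600p a bit over half, which is roughly the RT headroom this approach would be buying.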

However, with that said, doubling down on cooling does seem to be a key aspect of the dev kit design - more so than anything we've seen before.

How so? Correct me if I'm wrong, but according to my simple logic, because of that V-shape the console is losing sooo much functional space in the middle, where a beefy radiator or fan could be put. The very first thing that caught my attention when the dev-kit design leaked some time ago was exactly the lack of space for a fan, hence I immediately said to myself "this is 100% fake". You can surely fit a sophisticated fin-and-heatpipe design in that V-shaped body, but what about the fan? Will they use some sort of tiny, screaming laptop-grade fan(s)? Well, as 100+ million consoles sold show, you can easily get away with it, I guess. But then again, the PS dev-kits almost never resembled the consumer product, not even close.